Image created by GPT Image 1.5

The Citrini “2028 Global Intelligence Crisis” Is a Masterclass in First-Order Thinking

TL;DR: A viral “post from the future” about AI crashing the economy spooked markets and lit up every finance newsletter. It’s vivid writing. It’s also analytically thin. Here’s what it got right, where the logic breaks down, and why doing exercises like this still matters, if we’re willing to be honest about the rigor.

Everybody’s Talking About It

You’ve probably seen this one by now. On February 22, Citrini Research published a roughly 7,000-word scenario piece called “The 2028 Global Intelligence Crisis,” written as a fictional macro memo from June 2028. The premise: AI capability jumps in 2026, companies replace white-collar workers, profits boom, markets hit all-time highs, and then the whole thing comes apart because the people who used to buy things no longer have the income to buy things.

It’s written well. I’ll give it that. The fake Bloomberg headlines, the named tickers, the fabricated JOLTS prints. It reads like a Tom Clancy novel set inside a Goldman Sachs research deck. And it clearly hit a nerve. Software and payments stocks sold off the Monday after it circulated, and Bloomberg ran a piece on the author being surprised by the market reaction. My inbox filled up with people asking me what I thought.

So I did what I usually do. I grabbed an amazing bottle of red wine from one of my favorite wineries in Paso Robles, consumed a glass or two (or three), and thought about this deeply. My initial read: vivid storytelling, weak analysis.

Here’s what I found.

What It Actually Got Right

I want to be fair. There are pieces of this that land.

The section on private credit and insurance company balance sheets is the strongest part of the whole article. PE firms have acquired life insurers, used annuity deposits as permanent capital, and built offshore reinsurance structures for regulatory arbitrage. That combination is a real risk today. Strip away the AI framing and that section could stand on its own as a credible warning about financial plumbing most people ignore.

The SaaS pricing dynamic is also sharp. It doesn’t matter whether a company can actually rebuild Salesforce in-house. What matters is whether the procurement manager believes they can. That belief alone compresses pricing. That’s a genuine insight: the perception of AI capability can damage margins before the technology actually delivers.

And the core observation about income distribution is directionally correct. The top 10% of earners drive roughly half of US consumer spending. If that cohort faces serious income disruption, you don’t need mass unemployment to get a demand problem. That’s worth thinking about.

Now for the rest.

The Central Contradiction Nobody Seems to Notice

The biggest problem with the Citrini piece is that it wants to have it both ways. On one hand, companies achieve record profits by firing workers, pushing the S&P to 8,000. On the other hand, the consumer economy is collapsing because those same fired workers have stopped spending.

To be fair, the memo gestures at a lag. Margins expand first, the spending collapse comes later, delayed by savings buffers. But it never models what fills the final-demand gap during the melt-up. If consumer spending is already weakening beneath the surface, who is buying enough to generate those record corporate earnings? And how do the same forces that supposedly erode pricing power across software and intermediation simultaneously support an index-level surge to 8,000? The memo’s story needs a mechanism there, not just a convenient sequence of events.

And there’s a second contradiction stacked right on top of the first. The piece argues that AI makes coding so cheap that enterprises can replicate mid-market SaaS products in weeks, creating a “race to the bottom” on pricing. But it also argues that tech companies are achieving infinite leverage and record margins.

Now, it’s possible for margin collapse in one segment (SaaS, intermediation) to coexist with record margins in another (compute owners, model providers). But the memo blurs who exactly is earning those record profits. It slides between “tech” as a sectoral winner and “software” as a sector being commoditized, treating them as the same story when they’re opposite stories. You can’t sustain a broad index-level earnings boom when the majority of the companies in that index are in a pricing knife-fight. Perfect competition drives economic profits toward zero. Pick one.

“Ghost GDP” Sounds Smart. It Isn’t.

The piece has this rhetorical mic-drop moment: “We probably could have figured this out sooner if we just asked how much money machines spend on discretionary goods. Hint: it’s zero.”

From that line, Citrini builds the idea of “Ghost GDP”: output that shows up in national accounts but never circulates through the real economy. It’s a catchy phrase. People are quoting it everywhere. It’s also bad economics.

Machines don’t own themselves. Humans and corporations do. When a company saves $150,000 by replacing an analyst with an AI agent, that money doesn’t vanish. It goes to shareholders through dividends and buybacks (and shareholders are humans who spend, invest, and pay taxes). Or it goes to capital expenditures, like building GPU clusters in North Dakota, which require land, copper, steel, power plants, cooling systems, and construction workers. That’s money going directly into human hands, just in different sectors.

The real question is about distribution and velocity. If capital income rises and labor income falls, what happens depends on who receives the money, how much they save versus spend, and what the tax and transfer system does about it. That’s a distribution story and a policy story. Citrini instead narrates it as an accounting story, as if income literally disappears from the economy, which it doesn’t. That distinction matters, because policy stories have levers you can pull.

“Friction Goes to Zero” Confuses Rent-Seeking with Risk Management

The article’s most ambitious claim is that AI agents will strip all intermediation from the economy. Insurance renewals, travel bookings, payment rails, real estate commissions, delivery networks. Everything built on human limitations evaporates.

Some of that is directionally true. AI will absolutely compress margins on services built primarily on information asymmetry and consumer inertia. I’ve seen it already in my own work with law firms. But the piece makes a critical mistake: it treats all intermediation as rent extraction, when a meaningful portion is actually risk management, compliance, dispute resolution, and trust infrastructure.

Here’s what I mean. The piece claims AI agents will route around credit card interchange fees by switching to stablecoins on Solana. Sure, on-chain settlement fees are low. But the all-in cost stack for consumer commerce includes KYC/AML compliance, fraud protection, chargebacks, consumer protection laws, identity verification, and credit risk. Those functions don’t disappear because a machine is doing the clicking. They get repriced or rebundled. But “fees collapse to near-zero” is not the natural outcome. The more likely outcome is that the fees migrate to whoever controls the new agent layer.

And that’s the point the piece completely misses. If AI agents become the default interface for commerce, the agent layer itself becomes the new intermediary. Consumers will standardize on a small number of agent platforms because of trust, privacy, indemnification, and switching costs. The piece assumes the world becomes perfectly contestable. Platform economics suggests the opposite is just as likely: intermediation gets reconstituted one layer up.

The DoorDash scenario is even weaker. The claim that “a competent developer could deploy a functional competitor in weeks” ignores everything that makes a delivery network work beyond the app: driver supply, restaurant partnerships, insurance and liability, food safety compliance, local permitting, and the cold-start problem of building a two-sided marketplace. Coding an app is maybe 5% of the job.

The 24-Month Timeline Is Fantasy

The scenario asks you to believe that in roughly two years, the US goes from near all-time highs to 10.2% unemployment, a 38% S&P drawdown, a mortgage crisis in prime lending, an IMF intervention in India, and what amounts to a structural depression.

Anyone who has worked inside a Fortune 500 company should raise an eyebrow here. Enterprise procurement cycles, compliance requirements, security audits, integration testing, change management, and good old-fashioned organizational inertia create massive friction between “this is technically possible” and “we’ve actually deployed it at scale.” Many banks still run core systems written in COBOL decades ago. The physical world does not move at the speed of an LLM token generator.

I see this gap every week in my work with law firms. I’ve watched a partner get genuinely excited about an AI demo on Tuesday and then spend six months navigating ethics committees, IT security reviews, and practice group politics before a single workflow changes. Multiply that across every regulated industry and the Citrini timeline doesn’t just look aggressive. It looks detached from how organizations actually adopt technology.

And there’s another problem with the timeline: the piece assumes government just watches it happen. We saw during 2008 and 2020 that governments respond within weeks to existential economic threats. TARP, the CARES Act, emergency rate cuts, quantitative easing. You don’t have to think government is efficient to recognize that unemployment climbing toward 10.2% would produce an overwhelming political mandate for action long before it got there. The memo actually acknowledges this, noting the government is “starting to consider proposals.” But it treats that response as too little, too late, without ever defending why the policy reaction function would be unusually slow relative to the speed of the shock. That’s a political assumption doing a lot of heavy lifting, and it deserves more than a hand wave.

The One-Sided Ledger

This is where the piece becomes most frustrating, because it almost gets somewhere interesting and then stops thinking.

The entire argument focuses on how AI destroys incomes. But it completely ignores how AI destroys prices. If AI truly removes all the friction the piece describes (apps coded for free, commissions eliminated, intermediaries bypassed), then the cost of goods and services should plummet. In a world where production costs approach zero, the purchasing power of whatever money people still have goes up dramatically.

The piece presents a pure demand-destruction story without acknowledging that the same force destroying jobs is also reducing the cost of living. That’s not a minor omission. It’s the entire other side of the ledger.

Now, I’ll be fair. A rapid drop in wages combined with fixed 2024-era mortgage payments is a legitimate concern. Economists call that debt-deflation, and it’s genuinely scary. But if the cost of energy, housing construction, healthcare, and basic goods is also plummeting, that is exactly the scenario where a central bank intervenes aggressively. The bear case needs to explain why the saved dollars don’t show up as lower prices, higher real purchasing power, or new demand in adjacent categories. And it needs to explain why government would allow a debt-deflation spiral without deploying the monetary and fiscal tools it reached for in 2008 and 2020. Citrini never even tries.

“This Time Is Different” Is Always a Risky Bet

The piece spends one paragraph dismissing two centuries of evidence that technological disruption creates new categories of employment. Its argument boils down to: AI can do the new jobs too.

That’s exactly what pessimists have said about every major technology. Mechanized looms. Computerization. The internet. The new jobs didn’t emerge because people predicted them in advance. They emerged because human preferences, social structures, and economic incentives created demand for things nobody knew they wanted. The authors are asking you to believe that for the first time in recorded history, human creativity and social organization will fail to generate new forms of economic activity.

Maybe. But asserting it in a paragraph doesn’t make it an argument.

Why This Exercise Still Matters

I’ve spent a lot of words explaining why this piece doesn’t hold up. And I want to be clear: that doesn’t mean the exercise was pointless.

We need more people thinking about what happens if AI-driven productivity gains accrue narrowly while the households that drive consumer spending face income insecurity. That’s a real vulnerability. The connection between technological disruption and financial plumbing (the private credit exposure, the insurance balance sheet risk) is under-explored and worth serious attention.

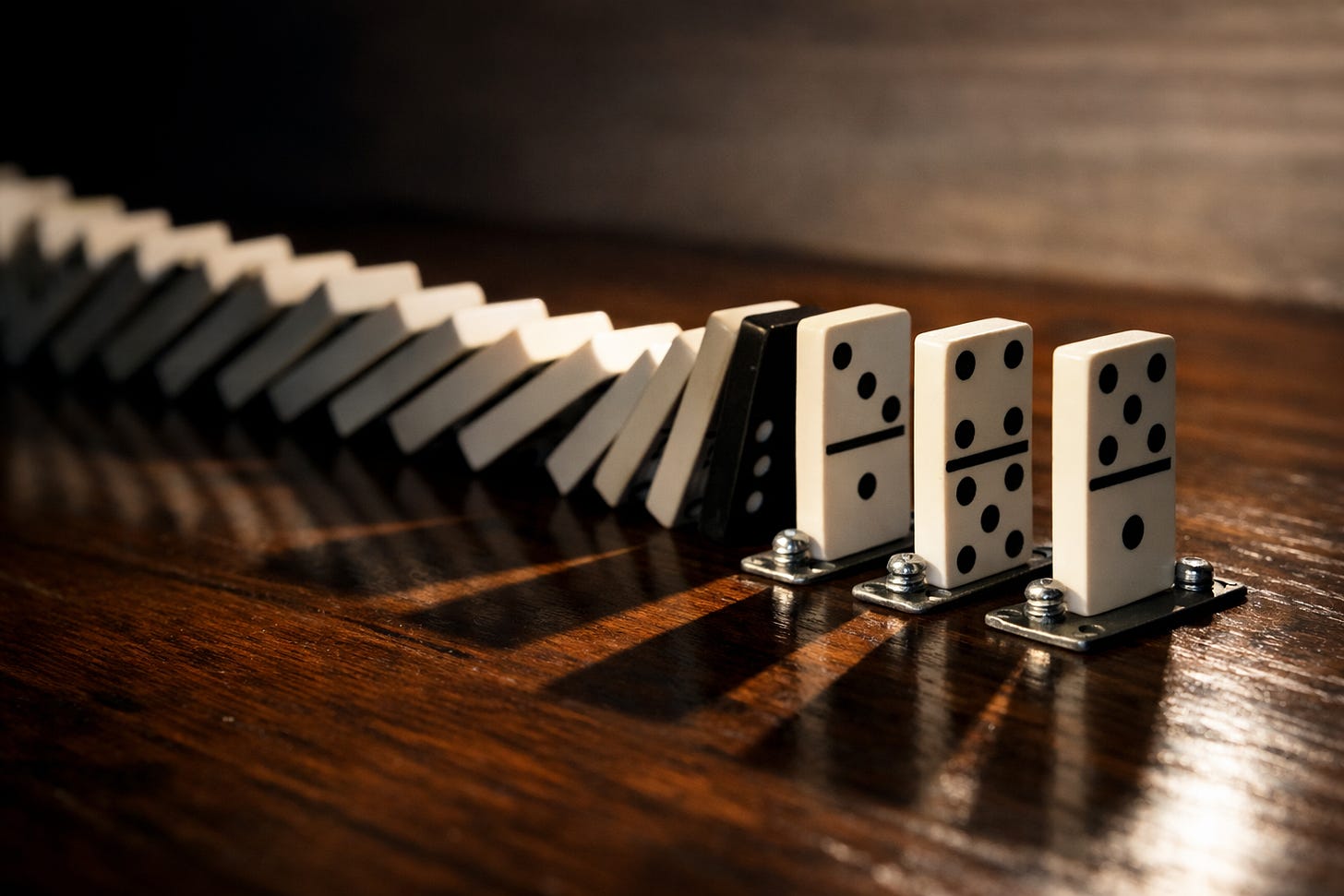

But here’s the thing. Scenario planning only has value when it’s rigorous. When you stack every negative assumption in the same direction, let every domino fall perfectly, and ignore every counterbalancing force the economy has, you haven’t built a scenario. You’ve written a screenplay.

Good scenario analysis forces you to follow your own logic to its stabilizing consequences, not just its catastrophic ones. It asks: if AI is this capable, what else gets cheaper? If this many people lose jobs, what does government do? If intermediation margins compress, where do the savings go? The best thought exercises make you uncomfortable in both directions, not just one.

None of us knows the future. I certainly don’t. But when we do this kind of work, we owe it to ourselves and to the people who read it to pressure-test our assumptions. To model second- and third-order effects, not just first-order ones. To acknowledge the brakes and stabilizers, even when the scary version makes for better reading.

What Would Make Me Update

If I’m wrong, and the Citrini scenario is closer to reality than I think, we should be able to see the early signals. Here’s what I’d watch: white-collar job openings declining sharply while blue-collar openings hold steady. Enterprise software renewal rates dropping meaningfully, not just in long-tail SaaS but in systems of record. Private credit marks diverging significantly from public market comps in the same sectors. And consumer spending softening among the top income decile while headline unemployment stays low. If those four things start happening simultaneously in the next 12 months, the Citrini thesis deserves a second, much harder look.

A scenario isn’t useful until you know what would falsify it. A thought exercise that doesn’t name its own kill switches is just a story dressed up as strategy.

The Citrini piece correctly identifies a first-order effect: AI can automate cognitive tasks and save money. Then it assumes AI operates with perfect, frictionless efficiency when destroying jobs but assumes the economy operates with maximum friction when trying to adapt. That’s not analysis. That’s choosing a conclusion and working backwards.

We can do better. And if AI is going to be as significant as most of us believe, we’re going to have to.

I'm Steve Smith, founder of Intelligence by Intent. I work with managing partners, general counsel, and executive teams on AI strategy, which means I spend most of my time pressure-testing claims about what this technology will and won't do. If that's useful to you, I write about it daily at smithstephen.com.

Steve Smith

Legal AI consultant with 25+ years executive leadership. Wharton MBA. Helping law firms adopt AI with CLE-eligible workshops and implementation sprints.

This article was originally published on Intelligence by Intent on Substack. Subscribe for weekly insights on AI adoption in legal practice.